Equitable Assessment Systems are Designed “to a T”

The "T" in Equity is Time

Designing for diversity requires careful attention to range.

As David Rose, one of the co-founders of Universal Design for Learning, states: “Everyone is on the spectrum.” And there are several spectra.

So when my team thinks about how to accommodate diverse learners, we think about ways to increase flexibility within the curricular system.

That usually makes sense to people.

And then I tell them that this flexibility is achieved by increasing range, which means we think about designs that accommodate as much of a given spectrum as possible.

Generally people follow the logic.

If you keep following the logic, these next design maneuvers will make sense, even if they upend the status quo…

To achieve this kind of flexibility, we took aim at the most difficult constraint of all: time. By increasing the amount of time between the introduction of a skill or concept and its corresponding assessment, we increase the range of time available to students and teachers to prepare for success.

To accomplish this structurally within curriculum, we decoupled mastery from end-of-unit timeframes.

That liberated us to remove end-of-unit tests and summative projects altogether. Students complete performance tasks based on some content and skills practiced within a given unit, but not all. The continual flow of low-stakes assessments also increases the range of out-of-school learning opportunities by supporting students on extended hospital stays, students involved in the juvenile justice system, and students learning during school closures.

To further accommodate the range of diverse learners, students do not demonstrate ‘mastery’ of a concept or skill just once. As the word mastery became a buzzword, its meaning was lost. Educators and assessment products erroneously suggest that mastery is a singular apex or destination that students reach once before ‘moving on.’ This misguided meaning contradicts the notions of expert and lifelong learning, where one concedes that there is always more to learn and always a way to grow.

Brilliant author and scholar Sarah Lewis steers us in the right direction in her book The Rise: Creativity, the Gift of Failure, and the Search for Mastery. She observed and researched present-day and long-ago inventors, artists, scientists, explorers and athletes. Real masters are lifelong learners, always longing for the next opportunity to hone their craft. She explains:

“The pursuit of mastery is an ever onward almost....

Masters are not experts because they take a subject to its conceptual end.

They are masters because they realize that there isn't one.” (Sarah Lewis, The Rise)

Building on her wisdom, we move students toward chronic, positive patterns of performance rather than a singular endpoint. To accomplish this, we expand the timeframe allotted to the assessment of a given competency by requiring multiple demonstrations across multiple contexts. We also design learning experiences that balance, on a longitudinal basis, opportunities to practice the given competency between assessment moments.

Patterns gradually emerge for each student vis-à-vis each competency, thus producing diverse collections of patterns across the class. Different combinations of strengths prove successful, thus fostering equity. Students reflect on these patterns to address weaknesses, and to choose their own ways to exceed expectations. It’s a form of computational thinking to boot!
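For readers who think in code, the idea of a pattern (rather than a single passing score) can be sketched roughly as follows. This is only an illustration under stated assumptions: the `Demonstration` record, the `has_consistent_pattern` function, the sample competencies and the thresholds of three successes across two contexts are all hypothetical, and are not the rules used in our program.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Demonstration:
    """One assessed performance of a competency (all fields are illustrative)."""
    competency: str
    context: str      # e.g. the unit or task in which it was assessed
    proficient: bool  # whether this performance met expectations


def has_consistent_pattern(demos, competency, min_successes=3, min_contexts=2):
    """Return True if the record shows repeated success across varied contexts.

    The thresholds are hypothetical placeholders, not values from any program.
    """
    successes = [d for d in demos if d.competency == competency and d.proficient]
    contexts = {d.context for d in successes}
    return len(successes) >= min_successes and len(contexts) >= min_contexts


# A made-up record for one student across a semester.
record = [
    Demonstration("modeling", "Unit 1 lab report", True),
    Demonstration("modeling", "Unit 2 data analysis", False),
    Demonstration("modeling", "Unit 3 design brief", True),
    Demonstration("modeling", "Unit 4 lab report", True),
    Demonstration("argumentation", "Unit 2 debate", True),
]

print(has_consistent_pattern(record, "modeling"))       # True
print(has_consistent_pattern(record, "argumentation"))  # False
```

Note that the one unsuccessful attempt in Unit 2 does not count against the student here. A single data point, good or bad, says little; the pattern over time is what matters.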

Students grow and develop in their own time, yet all within regular class time. We did not change the time spent in science class nor the number of days in the school year in order to actualize our assessment system.

By increasing the range of time within which diverse learners can demonstrate chronic patterns of performance, we take a big step closer to an equitable assessment system.

And we all but eliminate testing anxiety in the process, allowing assessment to shift from ultimate academic punishment to lifelong learning support mechanism.

I remain steadfast in my view that equity will only be achieved when school communities are willing to implement intentionally re-engineered academic structures and systems to optimize learning for all students.

High school science, and high schools more generally, have a long way to go. Discussion, teacher capacity-building, parent education, and leadership training are necessary but not sufficient.

To care is to act, and comprehensive, equitable, yet realistic designs don’t engineer themselves. I hope those readers who have been following the blog serially are beginning to feel the mission undergirding our work at EduChange.

Or Maybe the "T" is for Task Design

There is an overemphasis, indeed an over-reliance, on rubrics in schools today.

I think rubrics are useful guides when used properly, and we use them as tools in our assessment system. However, rubrics do not solve the problem of poor task design.

I have reviewed literally hundreds of secondary teachers’ tasks used as formal assessments, and I am continually reminded of how difficult it is for teachers to design high-quality tasks. Here we can define a task as anything beyond a multiple-choice, true/false or matching question that requires students to build some kind of response (ranging from a diagram or essay all the way up to multi-faceted prototypes or analyses).

To bring assessment back into balance, we need to ensure that the tasks students complete are equitable and worthy of their time.

I’ll summarize some key take-aways about task design based on our own designs and our work in schools:

- Assess only what you've taught

This seems obvious, yet in practice it proves difficult to achieve while striving to make a task rigorous.

To make rigor equitable, indeed to give all students a reasonable chance of success on the assessment, students must actively grapple with the content, skills and formats required by the task in advance.

I have seen many high school teachers add an out-of-the-blue challenge, usually near the end of an exam, ‘just to see what students do with it.’ I love these types of challenges, but their place resides during regular instructional time.

Assessment tasks designed using unreasonable mystery increase test anxiety needlessly.

Tasks that require the application of a taught concept or practiced skill in a different context or scenario are fair, but assessments are no place for novelty for novelty’s sake (or for “Gotcha!” moments).

- Beware the misappropriation of 'creativity.'

I find myself referring educators to this book time and again, to help point perceptions of creativity in an appropriate direction. This 2019 report from the OECD also may shed some light on the subject.

My main message here is that true creativity arises from deep disciplinary knowledge, novel connections across disciplines, and long periods of incubation. To foster creativity, we do best to structure curricula to hyper-connect content, deepen conceptual knowledge, and teach students how to think critically and autonomously.

Our entire Integrated Science Program is designed to foster creativity. But creativity has become a bit of an educational buzzword, and administrators are eager to demonstrate to school boards that expensive technology purchases are being used to foster it.

Many people automatically attach ‘creativity’ to a work product, to ‘a thing’ people create, so it is easy for creativity to be misappropriated in assessment tasks.

A common error is requiring students to demonstrate their understanding or proficiency using a medium never before used in class. For example, suggesting that students create a brochure when students have never learned about a brochure’s design, purposes or audiences is an unfair ask. The same is true for an app, platform or integration. Extending the timeframe for task completion doesn’t correct the problem.

The use of inappropriate materials in the name of creativity, which may lead to misconceptions about the content, also should be avoided. An example is the ubiquitous and misleading candy-model-of-a-cell.

Candies are inappropriate media for creativity in this context because a) cells are dynamic, not static, b) late middle school and early high school students should be challenged to deal with scale and proportion, and c) there are plenty of animations, videos and cartoons that students could marshal to demonstrate their understanding of cellular phenomena with better scientific accuracy.

Please, let’s stop misappropriating creativity in assessment tasks.

- Consider 'voice and choice' carefully.

Opportunities for students to express themselves as desired, and to make choices about how to demonstrate their understanding, naturally find their way into well-crafted, authentic, right-sized performance tasks.

The focus should always be on the skills and concepts that are being assessed. Adding a choice option to a convergent task does not make it more equitable, and it may confuse or frustrate students. Likewise, requiring students to find their own app or integration, often to be deployed for the first time, does nothing to enhance the learning demonstration and is an equally problematic use of choice.

In another vein, eliminating choice where it is necessary, as in the case of assistive technologies or testing accommodations, immediately renders an assessment inequitable.

I’ll write more about task design in later posts, but it is an important aspect of assessment that needs to be highlighted. Linked here is a short piece on performance tasks we wrote for our collaborating teachers, in case it is useful in your own discussions.

"T" is Always for Teaching

Assessment of and for learning depends on teaching. Specifically, it is dependent on the amount of time devoted to instruction. One could make the case for direct proportionality.

For whatever reason, the assessment conversation often leaves out the main structure that supports learning: instruction. When assessment happens should be determined by the provision of ample instruction, not by how long it takes the teacher to deliver her lectures and lead the class in the planned activities. As soon as those are done, students should be ready to take the end-of-unit summative assessment, correct?

Not by a long shot.

This is perhaps the main reason why we decoupled assessment and unit/project pathway timeframes.

In a competency-based model, seat time and content coverage do not drive the bus. And to design for equity, you must stretch the time horizon to the greatest possible range (as per the first section of this post).

It is the balance of integrated and interleaved content organization, cycles of performance and feedback, and varied problem-based instructional approaches grounded in disciplinary literacy that support learning in diverse classrooms.

Sample performance tasks and assessment items can demonstrate certain question types and appropriate amounts of student guidance. However, I find that many critiques of stand-alone performance tasks, and some rubrics used to assess these assessments, fall short.

Unless we understand the classroom context, participating students, prior exposure to content, and the instructional strategies leading up to the task, evaluations of a task based only on standards alignment render an incomplete picture.

By engaging in comprehensive design, it is possible to understand what works in real classrooms, and how to use standards to inform curricular designs rather than suffocate them.

We believe in internal accountability mechanisms to keep us working toward what we say we value, and to produce work of high quality. We use published rubrics, standards, research and peer review with outside experts as QA for our own work.

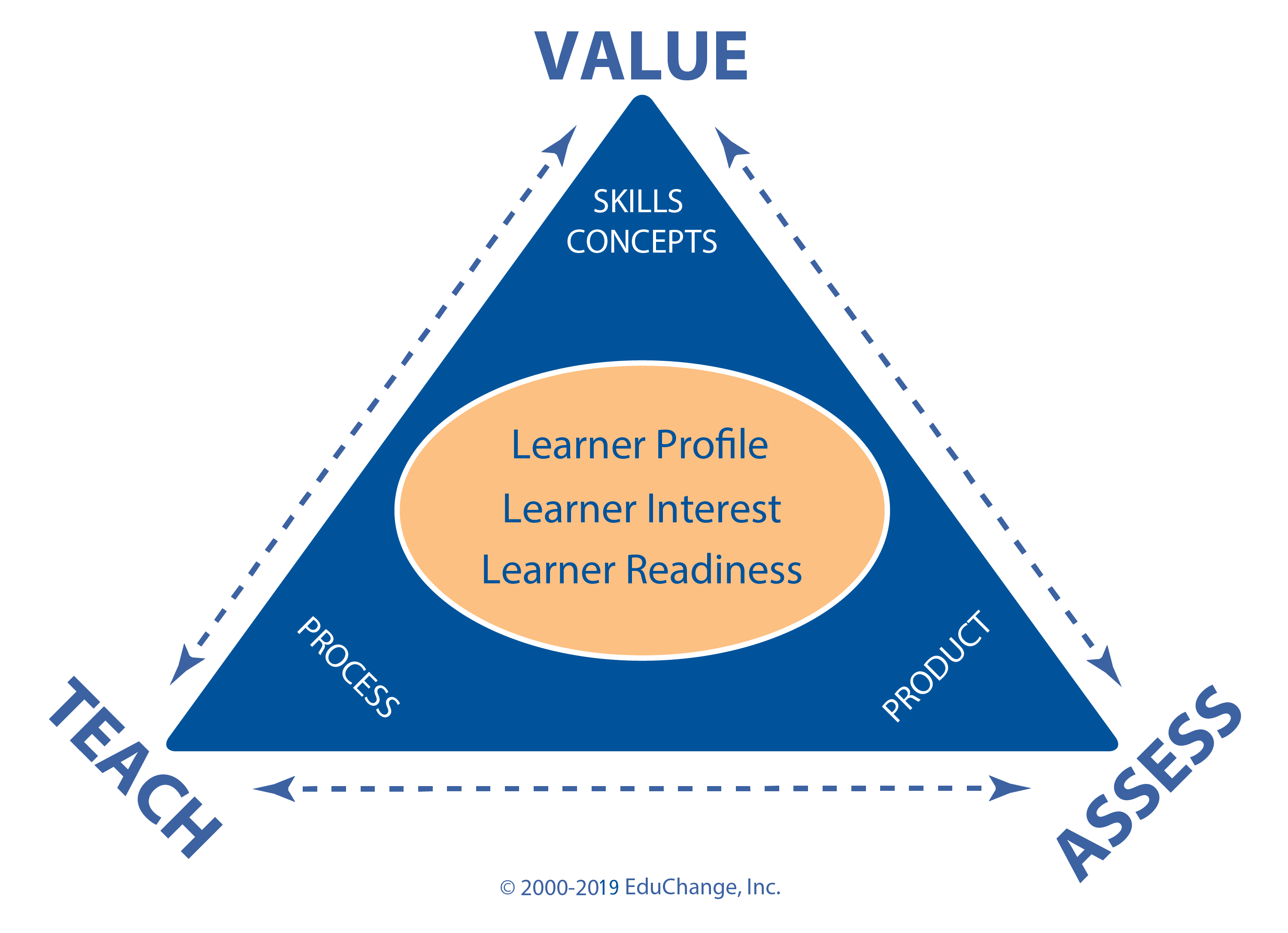

And for two decades EduChange staff have referred to this framework of checks and balances to support self-assessment of our work product.

To verbally describe it, we say, “If you value it, you must teach and assess it. If you assess it, it must be something you explicitly value and teach. If you spend time teaching it, you must assess it to demonstrate its value to students.”

All three sides of this triangle are inextricably linked and create a not-without-which set of conditions for our designs.
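One way to picture this check is as a comparison of three lists. The sketch below is purely illustrative: the `triangle_gaps` function and the sample items are hypothetical, and it assumes that what a team values, teaches and assesses can each be written down as a simple set of named items.

```python
def triangle_gaps(valued, taught, assessed):
    """Report items that appear on at least one side but not on all three.

    Returns a dict mapping each such item to the sides it is missing from.
    """
    sides = {"valued": set(valued), "taught": set(taught), "assessed": set(assessed)}
    everything = set().union(*sides.values())
    return {
        item: sorted(name for name, members in sides.items() if item not in members)
        for item in sorted(everything)
        if any(item not in members for members in sides.values())
    }


# Made-up example: collaboration is valued but never taught or assessed,
# while memorizing terms is taught and assessed without being valued.
gaps = triangle_gaps(
    valued={"collaboration", "data analysis"},
    taught={"data analysis", "memorizing terms"},
    assessed={"data analysis", "memorizing terms"},
)
print(gaps)
# {'collaboration': ['assessed', 'taught'], 'memorizing terms': ['valued']}
```

An empty result would mean the three sides agree. Anything that shows up in the output is a prompt for a design conversation, not an automatic verdict.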

I often wonder what would happen if policymakers kept our frameworks and standards pared down, and instead sustained decades-long iterative design cycles to ensure high-quality work products and support teacher training for classroom execution.

I wonder…

Suggested Citation: Saldutti, C. (2019, December 17). Equitable assessment systems are designed “to a T.” 1-2-3 Learn: EduChange Design Blog. https://educhange.com/equitableassessment/